S&I is a leading building management service provider in Korea. S&I has deployed Scenera’s Platform-as-a-Service (PaaS) to enable real-time monitoring of events within S&I-managed locations, using the cameras already installed in the buildings. The solution can detect events such as intrusion, accidental falls, people fighting, loitering, and left-behind items. It also provides x-ray image analysis to detect unauthorized devices. Real-time notifications of these events are sent to security personnel, who can then respond.

Visit Microsoft Customer Stories for more information on “Achieving Edge-to-Cloud Processing for DX with AI Capabilities in Facility Management”.

The Problem

Processing camera feeds is computationally intensive. One conventional approach does no Edge processing on the premises and instead requires expensive bandwidth to deliver many live video feeds to the cloud. Alternatively, on-premise Edge computing can be applied to the live video feeds. However, this can be cost-prohibitive, because it requires deploying enough capable hardware to handle all the camera feeds.

The question arises whether it is possible to intelligently distribute the processing load between the Cloud and the On-Premise Edge so that lightweight processing performed at the Edge can limit, or “gate” traffic from the Edge to the Cloud. In particular, might there be an approach whereby the system can leverage lightweight and inexpensive processing algorithms on the Edge, and compensate for this by using a more intensive and accurate algorithm in the cloud when needed?

The Solution

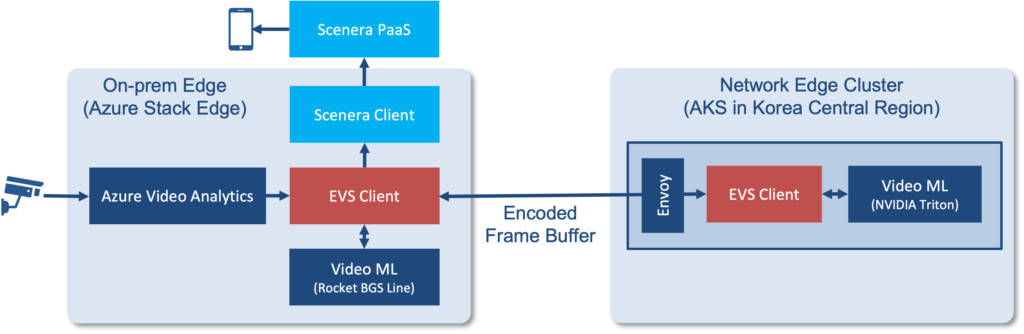

Scenera, TNM, and Microsoft worked together to deploy an architecture that distributes the processing for people counting. This entailed running constrained algorithms, which tend to be less accurate, on the Edge in the customer’s premises. As a result, either lower-cost hardware can be used or a larger number of cameras can be processed on the same Edge hardware.

The results from the constrained algorithm have a large number of errors. To overcome this, results with a lower certainty are forwarded to a Network Edge Cluster with a more robust and accurate algorithm. This algorithm is used to confirm whether the constrained algorithm has correctly detected a person or not.
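The gating idea described above can be illustrated with a minimal Python sketch. It is not the deployed EVS code: the `Detection` type, the `cascade` function, the `heavy_model` callback, and both threshold values are hypothetical names and numbers chosen for illustration. The sketch assumes the Edge model emits a confidence score per frame, accepts confident detections locally, drops very low scores, and forwards only the uncertain middle band for confirmation.

```python
from dataclasses import dataclass
from typing import Callable, Iterable, List, Tuple


@dataclass
class Detection:
    """Hypothetical output of the lightweight Edge model for one frame."""
    frame_id: int
    confidence: float  # score in [0, 1] that a person is present


def cascade(
    detections: Iterable[Detection],
    heavy_model: Callable[[int], bool],
    accept_above: float = 0.8,  # assumed threshold, not from the article
    reject_below: float = 0.2,  # assumed threshold, not from the article
) -> Tuple[List[int], int]:
    """Return (confirmed frame ids, number of frames forwarded upstream)."""
    confirmed: List[int] = []
    forwarded = 0
    for det in detections:
        if det.confidence >= accept_above:
            # Confident enough: accept on the Edge, nothing is sent upstream.
            confirmed.append(det.frame_id)
        elif det.confidence < reject_below:
            # Very unlikely to be a person: drop without forwarding.
            continue
        else:
            # Uncertain: forward to the more accurate model for confirmation.
            forwarded += 1
            if heavy_model(det.frame_id):
                confirmed.append(det.frame_id)
    return confirmed, forwarded


if __name__ == "__main__":
    sample = [
        Detection(1, 0.95),
        Detection(2, 0.50),
        Detection(3, 0.10),
        Detection(4, 0.60),
    ]
    # Stand-in for the heavy model: pretend only frame 2 contains a person.
    result = cascade(sample, heavy_model=lambda frame_id: frame_id == 2)
    print(result)
```

In this sketch only two of the four frames reach the heavy model, which is the mechanism by which a cascade can reduce both upstream traffic and use of the more expensive model. The actual thresholds and forwarding criteria used in the deployment are not stated in this article.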

The benefit of this approach is that it reduces on-premises hardware costs and reduces the bandwidth of video sent to the cloud, while maintaining the highest possible accuracy of the results.

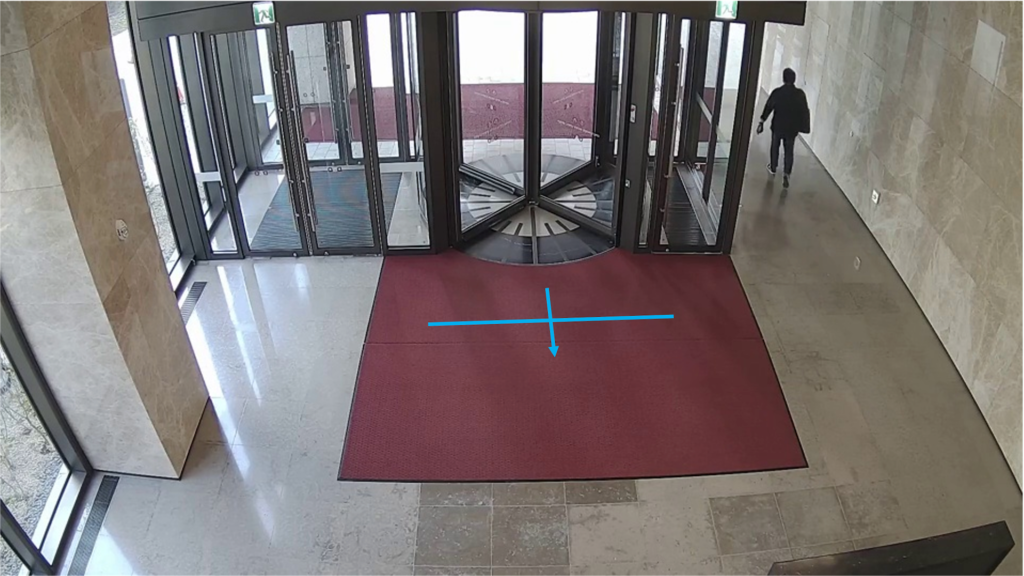

We deployed and benchmarked the solution in the S&I facility by applying it to four camera feeds and counting people entering turnstiles. We measured the accuracy of the solution by comparing the results generated by the camera with events that we were able to visually inspect. On a relatively small sample of 100 images, the measured accuracy was greater than 95%.

- Compared to a solution that shipped the encoded video to the cloud, the Edge Video System’s (EVS) split architecture transferred 22 times less data to the cloud.

- EVS’s on-premise Edge usage was minimal and only CPU-based; no GPU was used on the on-premise Edge. Also, for GPU usage, our cascaded pipeline reduced the need for the heavy AI model by two-thirds.

- The overall latency of the solution was a few hundred milliseconds.

The distributed architecture that was implemented demonstrated a significant reduction in network bandwidth and a reduction in Edge hardware costs, while maintaining a high degree of accuracy with low latency.

Following the initial testing and installations of the system at LGE’s buildings, S&I is ready to commercialize EVS in its building management application globally.

This article was published on Microsoft Customer Stories on November 1st, 2022.